Keeping IT systems running smoothly isn’t just about having the right tools—it’s about knowing what to watch, when to act, and how to prevent small issues from becoming major incidents. This comprehensive guide explores IT monitoring best practices and actionable strategies for building robust infrastructure monitoring systems that help IT teams prevent downtime, optimize performance, and maintain business continuity across increasingly complex technology environments.

Most organizations implement basic monitoring reactively after incidents occur, scrambling to add visibility after experiencing costly outages or performance degradations. This reactive approach fails to address the fundamental challenges of modern infrastructure complexity. Effective IT infrastructure monitoring requires proactive strategies that align with business objectives, scale with organizational growth, and adapt to evolving technology landscapes rather than simply responding to problems after they’ve already impacted users and revenue.

What Is IT Infrastructure Monitoring?

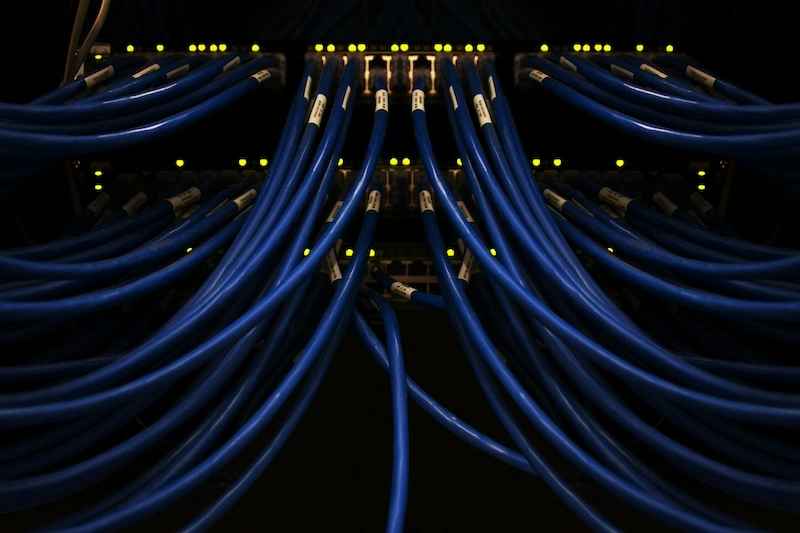

IT monitoring refers to the continuous observation and analysis of technology systems to ensure optimal performance, availability, and reliability. At its core, infrastructure monitoring involves tracking the health and performance of servers, networks, applications, databases, and cloud resources that support business operations. This goes beyond simple uptime checks—comprehensive monitoring provides deep visibility into system behavior, resource utilization, and performance trends that inform both immediate incident response and long-term capacity planning.

The distinction between monitoring, observability, and alerting often confuses IT teams. Monitoring collects and displays metrics about system state. Observability is the ability to understand why a system behaves as it does from the metrics, logs, and traces it emits. Alerting notifies teams when metrics cross defined thresholds. Effective IT infrastructure monitoring combines all three elements to create a complete picture of infrastructure health and behavior.

Reactive monitoring fails in complex environments because modern infrastructure changes constantly—new containers spin up, microservices communicate across distributed systems, and cloud resources scale dynamically. By the time reactive monitoring detects a problem, cascading failures may have already spread across interconnected systems, turning a minor issue into a major incident that impacts multiple services and customers.

Types of IT Infrastructure Monitoring

Comprehensive infrastructure monitoring requires coverage across multiple layers of the technology stack:

- Network monitoring tracks bandwidth utilization, latency, packet loss, and connection quality to ensure reliable communication between systems and users

- Server monitoring watches CPU usage, memory consumption, disk I/O, and running processes to prevent resource exhaustion and performance degradation

- Application performance monitoring (APM) goes deeper than infrastructure metrics to track response times, error rates, transaction volumes, and code-level performance across distributed applications

- Database monitoring focuses on query performance, connection pools, replication lag, and storage consumption to prevent data layer bottlenecks

- Cloud infrastructure monitoring adapts traditional approaches for dynamic environments like AWS, Azure, and GCP where resources appear and disappear based on demand

- End-user experience monitoring completes the picture by measuring actual user interactions rather than just backend system health

IT Monitoring Best Practices: Foundation

Establishing Baselines and Scope

Establishing baseline performance metrics provides the foundation for effective IT infrastructure monitoring. Without understanding normal behavior, teams can’t distinguish between routine fluctuations and genuine problems. Collect several weeks of data across different time periods—business hours versus overnight, weekdays versus weekends, month-end processing versus typical days—to establish realistic baselines that account for natural variation in system behavior.
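One way to capture this variation is to bucket samples by hour of the week and keep summary statistics per bucket. The following is a minimal sketch under that assumption; `build_baseline` and the sample format are hypothetical and not taken from any particular monitoring tool.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean, pstdev


def build_baseline(samples):
    """Group (timestamp, value) samples by (weekday, hour) and summarize each bucket.

    Returns {(weekday, hour): (mean, population_stddev)}, where Monday is weekday 0.
    """
    buckets = defaultdict(list)
    for ts, value in samples:
        buckets[(ts.weekday(), ts.hour)].append(value)
    return {key: (mean(vals), pstdev(vals)) for key, vals in buckets.items()}


# Hypothetical CPU readings: two Mondays at 09:00 and one Sunday at 03:00.
samples = [
    (datetime(2024, 1, 1, 9, 0), 60.0),  # Monday
    (datetime(2024, 1, 8, 9, 0), 70.0),  # Monday
    (datetime(2024, 1, 7, 3, 0), 10.0),  # Sunday
]
baseline = build_baseline(samples)
print(baseline[(0, 9)])  # summary for the Monday 09:00 bucket
```

With several weeks of data, each bucket holds enough samples for its mean and spread to describe "normal" for that hour, so Monday morning load is never compared against Sunday night.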

Defining what to monitor versus monitoring everything prevents both gaps in visibility and overwhelming noise. Start with business-critical systems and work outward based on dependencies and impact:

- Business-critical services that directly affect customer experience or revenue generation deserve comprehensive monitoring across all layers

- Supporting infrastructure like databases, message queues, and authentication services need focused monitoring on performance and availability

- Development and testing environments require lighter monitoring focused on resource utilization and cost control

- Edge cases and rarely-used features may not warrant real-time monitoring but should still have basic health checks

Alert Management

Alert fatigue prevention and intelligent thresholds require careful tuning based on baseline behavior and business impact. Static thresholds like “CPU above 80%” generate false positives during legitimate traffic spikes while missing gradual degradation. Dynamic thresholds that adapt to time-of-day patterns, trending growth, and historical variance catch real problems while reducing noise that causes teams to ignore notifications.
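The contrast between static and dynamic thresholds can be illustrated with a simple rule: alert only when a reading exceeds the historical mean for its time bucket by more than `k` standard deviations. This is a sketch, not a recommendation of specific values; `is_anomalous`, `k`, and `min_spread` are illustrative assumptions.

```python
from statistics import mean, pstdev


def is_anomalous(value, history, k=3.0, min_spread=1.0):
    """Flag value if it exceeds the historical mean by more than k standard deviations.

    history: past readings for the same time-of-week bucket.
    min_spread: floor on the standard deviation, so a perfectly flat history
    does not make every tiny change look anomalous.
    """
    center = mean(history)
    spread = max(pstdev(history), min_spread)
    return value > center + k * spread


# Hypothetical CPU history (percent) for two different buckets.
peak_hours = [78, 82, 85, 80, 83]  # busy period: 85% is routine here
overnight = [12, 15, 11, 14, 13]   # quiet period: 60% is unusual here

print(is_anomalous(85, peak_hours))  # routine for this bucket
print(is_anomalous(60, overnight))   # far above the overnight norm
```

A static "CPU above 80%" rule would fire on the first reading and stay silent on the second, which is the opposite of what the data suggests.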

Documentation and runbook creation transform monitoring from data collection into actionable intelligence. Every alert should link to a runbook explaining what the alert means, what business impact it indicates, how to investigate further, and step-by-step remediation procedures. This documentation enables faster response during incidents and helps junior team members contribute effectively to problem resolution.
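A lightweight way to enforce the runbook rule is to check alert definitions before they are deployed. The sketch below assumes alert rules are available as simple records; the `AlertRule` fields and the example URL are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AlertRule:
    name: str
    summary: str           # what the alert means
    business_impact: str   # what impact it indicates
    runbook_url: str = ""  # link to investigation and remediation steps


def rules_missing_runbook(rules):
    """Return the names of alert rules that have no runbook link."""
    return [rule.name for rule in rules if not rule.runbook_url.strip()]


rules = [
    AlertRule("disk_full", "Disk usage above limit", "Writes may fail",
              "https://wiki.example.com/runbooks/disk-full"),
    AlertRule("queue_backlog", "Message queue depth growing", "Delayed orders"),
]
print(rules_missing_runbook(rules))
```

Running a check like this in a review or deployment step keeps undocumented alerts from reaching on-call engineers.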

Common Monitoring Mistakes to Avoid

Over-monitoring is the most common pitfall in IT monitoring implementations. Teams deploy monitoring tools with default configurations that generate hundreds of alerts, most of which reflect normal conditions or low-impact issues. Within weeks, teams begin ignoring notifications and miss the critical alerts buried in the noise. Good monitoring practice favors quality over quantity: fewer, more meaningful alerts that always warrant attention.

Monitoring without actionable response procedures wastes resources collecting data that never drives improvements. Every monitored metric should connect to either an automated response or a documented human procedure. If no action would result from an alert, question whether that metric needs monitoring at all. Two related mistakes are also common. Siloed monitoring tools fragment infrastructure visibility across multiple dashboards and alert streams, leaving gaps between them. Neglecting to monitor the monitoring systems themselves creates blind spots where monitoring failures go undetected.
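Monitoring the monitoring system is often done with a heartbeat, sometimes called a dead man's switch: the monitoring system checks in on a schedule, and an independent watcher raises the alarm when the check-ins stop. The following is a minimal sketch of that idea; `HeartbeatWatchdog` is a hypothetical name, and a real deployment would run the watcher on separate infrastructure.

```python
import time


class HeartbeatWatchdog:
    """Tracks the last heartbeat from a monitoring system and reports staleness."""

    def __init__(self, max_silence_seconds, clock=time.monotonic):
        self.max_silence = max_silence_seconds
        self.clock = clock
        self.last_beat = clock()

    def beat(self):
        """Record that the monitoring system has just checked in."""
        self.last_beat = self.clock()

    def is_stale(self):
        """True when no heartbeat has arrived within the allowed silence window."""
        return self.clock() - self.last_beat > self.max_silence


# Demonstration with a fake clock so the example runs instantly.
now = [0.0]
watchdog = HeartbeatWatchdog(max_silence_seconds=60, clock=lambda: now[0])

now[0] = 30.0
watchdog.beat()             # monitoring system checks in at t=30
now[0] = 80.0
print(watchdog.is_stale())  # 50s of silence, within the limit
now[0] = 120.0
print(watchdog.is_stale())  # 90s of silence, heartbeat missed
```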

Designing Your Monitoring Architecture

Architectural Approaches and Collection Models

Centralized versus distributed monitoring approaches each offer distinct advantages. Centralized monitoring simplifies management and provides unified visibility but creates single points of failure and bandwidth bottlenecks. Distributed monitoring improves resilience and reduces network traffic but complicates management and data aggregation. Hybrid approaches often work best—distributed collectors feeding centralized analytics and visualization platforms.

Push versus pull monitoring models affect scalability and network design. Pull models, where monitoring systems query targets, work well for stable infrastructure but struggle in dynamic environments where endpoints appear and disappear frequently, unless the target list is kept current through service discovery. Push models, where systems send metrics to collectors, tend to scale better for cloud-native architectures but require careful queue management to prevent data loss during network issues.
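The queue management concern on the push side can be sketched as a bounded buffer that holds metrics while the collector is unreachable and records how many it had to discard. `PushBuffer` and its drop-oldest policy are assumptions chosen for illustration; other policies (drop-newest, spill to disk) are equally valid.

```python
from collections import deque


class PushBuffer:
    """Bounded buffer for metrics awaiting delivery to a collector."""

    def __init__(self, capacity):
        self.queue = deque()
        self.capacity = capacity
        self.dropped = 0

    def enqueue(self, metric):
        if len(self.queue) >= self.capacity:
            self.queue.popleft()  # drop the oldest to keep the newest data
            self.dropped += 1
        self.queue.append(metric)

    def flush(self, send):
        """Send queued metrics in order; stop at the first failure.

        send: callable returning True on successful delivery.
        Returns the number of metrics delivered.
        """
        sent = 0
        while self.queue:
            if not send(self.queue[0]):
                break
            self.queue.popleft()
            sent += 1
        return sent


buffer = PushBuffer(capacity=3)
for reading in range(5):  # five readings arrive during a network outage
    buffer.enqueue(reading)

delivered = buffer.flush(send=lambda metric: True)  # network is back
print(delivered, buffer.dropped)
```

Exposing the `dropped` counter as a metric of its own makes data loss visible instead of silent.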

Agent-based versus agentless monitoring trade-offs involve visibility depth versus deployment complexity. Agents installed on monitored systems provide detailed metrics and can execute local checks, but require deployment, updates, and resource overhead on every monitored system. Agentless monitoring via SNMP, APIs, or log parsing reduces deployment burden but often provides less detailed metrics.

IT Infrastructure Monitoring Best Practices for Scalability

Hierarchical monitoring structures for enterprise environments prevent overwhelming central systems with data from thousands of endpoints. Regional collectors aggregate and pre-process data before forwarding summaries to central systems, reducing network bandwidth and storage requirements while maintaining drill-down capabilities when detailed investigation becomes necessary.
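The aggregation step can be illustrated as follows: each regional collector reduces raw samples to a small summary, and the central system merges summaries rather than raw data. This is a sketch; the function names and the choice of summary fields are assumptions.

```python
def summarize(values):
    """Reduce raw samples to a compact summary at a regional collector."""
    return {
        "count": len(values),
        "sum": sum(values),
        "min": min(values),
        "max": max(values),
    }


def merge(summaries):
    """Combine regional summaries at the central system."""
    return {
        "count": sum(s["count"] for s in summaries),
        "sum": sum(s["sum"] for s in summaries),
        "min": min(s["min"] for s in summaries),
        "max": max(s["max"] for s in summaries),
    }


# Hypothetical response times (ms) collected in two regions.
regions = {"eu": [120, 80, 100], "us": [200, 150]}
central = merge([summarize(samples) for samples in regions.values()])
print(central, central["sum"] / central["count"])
```

Forwarding `sum` and `count` rather than a per-region average lets the central system compute a correct global mean even when regions have different sample counts.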

Auto-discovery and dynamic inventory management become essential as infrastructure scales beyond manual tracking. Modern IT monitoring tools automatically detect new systems, identify their type and role, apply appropriate monitoring templates, and remove decommissioned resources without manual intervention. This automation prevents monitoring gaps as infrastructure evolves.
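At its core, this kind of automation is a reconciliation between what discovery reports and what is currently monitored. The sketch below assumes discovery yields a host-to-role mapping; `TEMPLATES`, the role names, and the check names are hypothetical.

```python
# Hypothetical monitoring templates keyed by system role.
TEMPLATES = {
    "web": ["http_latency", "error_rate"],
    "db": ["query_time", "replication_lag"],
}
DEFAULT_TEMPLATE = ["ping"]


def reconcile(discovered, monitored):
    """Bring the monitored inventory in line with discovery results.

    discovered: {host: role} reported by the discovery scan.
    monitored:  {host: [checks]} currently configured.
    Returns (updated_inventory, added_hosts, removed_hosts).
    """
    to_add = discovered.keys() - monitored.keys()
    to_remove = monitored.keys() - discovered.keys()
    updated = {host: checks for host, checks in monitored.items()
               if host not in to_remove}
    for host in to_add:
        updated[host] = list(TEMPLATES.get(discovered[host], DEFAULT_TEMPLATE))
    return updated, sorted(to_add), sorted(to_remove)


discovered = {"web-1": "web", "db-1": "db", "cache-1": "cache"}
monitored = {"web-1": ["http_latency", "error_rate"], "old-app": ["ping"]}

inventory, added, removed = reconcile(discovered, monitored)
print(added, removed)
print(inventory)
```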

Monitoring containerized and microservices architectures requires fundamentally different approaches than traditional server monitoring. Containers live for minutes or hours rather than months, microservices communicate through complex mesh networks, and workloads shift across clusters dynamically. Effective monitoring tracks service endpoints rather than individual containers, correlates distributed traces across service boundaries, and adapts to constantly changing infrastructure topology.

Alert Strategy and Notification Design

Severity classification and escalation paths prevent alert overload while ensuring critical issues receive immediate attention:

- P1 critical alerts page on-call engineers immediately for service outages affecting customers, requiring immediate response regardless of time

- P2 high alerts create incidents during business hours but wait until morning for overnight occurrences, indicating significant issues without immediate customer impact

- P3 medium alerts generate tickets for investigation within 24-48 hours, representing problems that need attention but aren’t urgent

- P4 low alerts feed into weekly review meetings for trend analysis and continuous improvement rather than immediate action
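The severity levels above map naturally onto a small routing function. The following sketch assumes business hours of 09:00 to 17:00 on weekdays; that window and the action names are illustrative, not prescribed.

```python
from datetime import datetime


def route_alert(severity, now):
    """Choose a notification action from the alert severity and the current time."""
    business_hours = now.weekday() < 5 and 9 <= now.hour < 17
    if severity == "P1":
        return "page_on_call"  # immediate, regardless of time
    if severity == "P2":
        return "open_incident" if business_hours else "hold_until_morning"
    if severity == "P3":
        return "create_ticket"  # investigate within 24-48 hours
    if severity == "P4":
        return "add_to_weekly_review"
    raise ValueError(f"unknown severity: {severity}")


print(route_alert("P1", datetime(2024, 1, 6, 3, 0)))   # Saturday, 03:00
print(route_alert("P2", datetime(2024, 1, 2, 10, 0)))  # Tuesday, 10:00
print(route_alert("P2", datetime(2024, 1, 2, 2, 0)))   # Tuesday, 02:00
```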

Correlation and suppression are advanced practices that dramatically improve the signal-to-noise ratio. When a core router fails, hundreds of dependent systems become unreachable; suppress those downstream alerts and focus on the root cause. Alert content matters as well: a context-rich alert that names the affected service, the likely business impact, and the relevant runbook is actionable, while a raw metric notification is just a cryptic number. Integration with incident management platforms like PagerDuty or Opsgenie connects monitoring to formalized incident response workflows, handling on-call scheduling, escalation, and acknowledgment tracking.
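The core-router scenario can be sketched with a dependency map: an alert is suppressed when anything upstream of the failing system is also failing, which leaves only the root causes. This is a simplified illustration that assumes an acyclic dependency graph; `find_root_causes` and the host names are hypothetical.

```python
def find_root_causes(failing, depends_on):
    """Return failing systems that have no failing upstream dependency.

    failing:    iterable of system names currently alerting.
    depends_on: {system: [systems it depends on]}.
    """
    failing = set(failing)

    def upstream_failed(node):
        # Walk all transitive dependencies looking for another failing system.
        stack = list(depends_on.get(node, []))
        seen = set()
        while stack:
            dep = stack.pop()
            if dep in seen:
                continue
            seen.add(dep)
            if dep in failing:
                return True
            stack.extend(depends_on.get(dep, []))
        return False

    return sorted(node for node in failing if not upstream_failed(node))


depends_on = {
    "web-1": ["switch-a"],
    "web-2": ["switch-a"],
    "switch-a": ["core-router"],
    "db-1": ["core-router"],
}
failing = ["web-1", "web-2", "switch-a", "db-1", "core-router"]
print(find_root_causes(failing, depends_on))
```

Five alerts collapse into one notification about `core-router`; the suppressed alerts can still be attached to that notification as supporting context.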

Integration and Automation

ITSM tool integration with ServiceNow or Jira Service Management bridges the gap between IT monitoring alerts and formalized IT service management processes. Automated ticket creation from alerts, bidirectional status updates, and resolution tracking create seamless workflows from detection through resolution and post-incident review.

ChatOps and collaboration platform notifications through Slack or Teams bring monitoring visibility into team communication channels. Engineers see alerts in context of ongoing discussions, can acknowledge incidents without leaving their primary workspace, and collaborate naturally on problem resolution.

Automated remediation and self-healing systems represent the pinnacle of mature IT infrastructure monitoring best practices. When disk space runs low, automatically clean up log files. When application pools become exhausted, automatically scale resources. When services become unresponsive, automatically restart them and escalate only if automated remediation fails.
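The "remediate first, escalate on failure" pattern can be expressed as a small loop. In this sketch, `check`, `fix`, and `escalate` are placeholder callables standing in for a health check, a restart or cleanup action, and a paging integration.

```python
def remediate(check, fix, escalate, max_attempts=2):
    """Apply fix up to max_attempts times; escalate if the check never passes."""
    for attempt in range(1, max_attempts + 1):
        fix()
        if check():
            return f"recovered after {attempt} attempt(s)"
    escalate()
    return "escalated"


# Simulated service that recovers on the second restart.
state = {"healthy": False, "restarts": 0, "paged": False}


def restart_service():
    state["restarts"] += 1
    state["healthy"] = state["restarts"] >= 2


result = remediate(
    check=lambda: state["healthy"],
    fix=restart_service,
    escalate=lambda: state.update(paged=True),
)
print(result, state)
```

Capping the number of attempts matters: an unbounded restart loop can mask a persistent fault and delay the human response the escalation is meant to trigger.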

Continuous Improvement Framework

Regular review and optimization of monitoring coverage prevents stagnation as infrastructure evolves. Quarterly reviews should assess whether monitoring covers new systems, whether alerts still reflect current priorities, and whether response procedures remain accurate. Post-incident reviews and monitoring gap analysis transform incidents into learning opportunities—after every significant incident, ask whether monitoring detected the issue promptly, whether alerts were clear about business impact, and where improvements could help catch similar issues faster.

Metrics for measuring monitoring effectiveness help justify continued investment and identify improvement opportunities. Track mean time to detect (MTTD) and mean time to resolve (MTTR) trends over time, measure alert accuracy (the share of alerts that actually required action), and survey teams about monitoring usefulness. These metrics demonstrate monitoring value while highlighting areas needing attention.
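These figures are straightforward to compute from incident records. The sketch below measures both MTTD and MTTR from the moment the problem started, which is one common convention but not the only one; the record fields and sample values are hypothetical.

```python
from datetime import datetime, timedelta


def mean_gap(incidents, start_key, end_key):
    """Average time between two timestamps across a list of incident records."""
    gaps = [incident[end_key] - incident[start_key] for incident in incidents]
    return sum(gaps, timedelta()) / len(gaps)


# Hypothetical incident records.
incidents = [
    {"started": datetime(2024, 3, 1, 10, 0),
     "detected": datetime(2024, 3, 1, 10, 4),
     "resolved": datetime(2024, 3, 1, 11, 0)},
    {"started": datetime(2024, 3, 9, 2, 0),
     "detected": datetime(2024, 3, 9, 2, 10),
     "resolved": datetime(2024, 3, 9, 2, 40)},
]

mttd = mean_gap(incidents, "started", "detected")
mttr = mean_gap(incidents, "started", "resolved")

# Alert accuracy: share of fired alerts that required action.
alerts_fired, alerts_actionable = 200, 150
alert_accuracy = alerts_actionable / alerts_fired

print(mttd, mttr, alert_accuracy)
```

Tracking these values per quarter, rather than as a single number, shows whether changes to monitoring are actually shortening detection and resolution times.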

Conclusion

IT infrastructure monitoring best practices rest on three critical pillars: establishing comprehensive monitoring coverage across all critical systems, implementing intelligent alerting and response procedures that drive action without overwhelming teams, and continuously optimizing based on business needs and infrastructure evolution. Teams should start with critical systems, prove value through reduced downtime and faster incident resolution, then expand coverage systematically. Organizations implementing structured IT monitoring best practices consistently see 60% faster incident resolution and a 45% reduction in unplanned outages compared to reactive monitoring approaches.